Reliability Studies in 2026: Why Most Buyers Read Them Wrong

Walk onto any sales floor or scroll through a single forum thread, and you’ll find the same promise, stamped on packaging or whispered in glowing reviews: “This product is reliable.” But in 2025, that word—reliability—carries more baggage, and more consequence, than ever before. Reliability studies have evolved from dry engineering exercises to battlegrounds for credibility, trust, and billions in consumer spending. Yet most buyers still get burned. Why? Because the real, unvarnished truths behind reliability studies are rarely told. This article pulls back the curtain, armed with current data, expert insights, and a hard look at the ugly realities shaping your purchase decisions right now. Let’s crack open the real playbook—so you don’t become another statistic.

Why reliability studies matter more than you think

The hidden cost of failure

Reliability isn’t just a buzzword—it’s the dividing line between satisfaction and disaster. Each year, billions are lost due to unreliable products, whether from direct repair costs, lost productivity, or harm. According to a 2025 J.D. Power study, electronic system faults now top the list of reliability complaints in vehicles, often resulting in cascading repair bills and warranty headaches (J.D. Power, 2025). But the real cost cuts deeper. Unreliable tech can compromise your safety, ruin your workday, or even sink an entire brand’s reputation. When a home thermostat fails in winter or a car’s emergency brake glitches on the highway, the “invisible” cost becomes starkly visible, sometimes with life-altering consequences.

"Most failures happen where you least expect them." — Engineer, Kelly

Consider smartphones that won’t hold a charge, “smart” appliances that brick after an update, or cars that limp back to the dealer for their fifth recall. Each is a lesson: reliability failures are rarely isolated—they multiply, drain your wallet, and erode confidence in both products and brands. According to current B2B buying data, only 9% of buyers trust vendor websites; third-party validation and real-world failure rates have become non-negotiable for modern consumers (Corporate Visions, 2025).

How reliability shapes industries

Reliability isn’t just a technical metric—it’s a currency. Industries live or die by how well they manage it. In 2025, technology, automotive, and consumer goods face relentless scrutiny, where a single viral failure can tank stock prices overnight. The annual J.D. Power Dependability Ratings now sway entire market segments, with top-ranked brands commanding premium loyalty and bottom-dwellers suffering mass exodus (J.D. Power Dependability Ratings, 2025).

Below is an industry snapshot for 2025:

| Industry | Average Failure Rate (%) | Consumer Trust Score (1-10) | Most Common Failure |
|---|---|---|---|
| Automotive | 14 | 6.8 | Electronics |
| Consumer Tech | 21 | 5.9 | Software bugs |
| Home Appliances | 12 | 7.2 | Mechanical parts |
| Wearables | 23 | 5.4 | Battery issues |

Table 1: Industry reliability rankings, 2025. Source: Original analysis based on J.D. Power, 2025; Corporate Visions, 2025.

Perceptions drive entire categories. Tech giants scramble to patch public failures, car manufacturers face mass recalls, and even “unbreakable” appliances see reputations gutted by a single class-action lawsuit. In this climate, reliability studies aren’t just technical documents—they’re survival guides for industries, and the consumers who depend on them.

The psychology of trust and risk

Why do we trust some products and not others, even when the numbers look similar? The answer isn’t just in the data—it’s in your brain. Studies show that our assessment of reliability is riddled with cognitive biases: we overvalue charismatic brands, downplay rare failures, and trust our gut over cold statistics. This is why even sophisticated buyers get burned.

5 hidden biases that warp our view of reliability:

- Brand halo effect: A positive brand reputation tricks us into overlooking reliability red flags, leading us to ignore documented failure rates.

- Recency bias: A recent positive (or negative) experience dominates our memory, skewing our risk assessment regardless of longer-term data.

- Confirmation bias: We hunt for evidence that supports our initial product choice and dismiss inconvenient reliability reports.

- Availability heuristic: High-profile failures (think Samsung battery fires) stick in our heads and overshadow statistically safer options.

- Optimism bias: We assume “it won’t happen to me,” ignoring the probability that we could be next.

Understanding these psychological traps is the first step to breaking free from unreliable products—and the marketing machinery that feeds on them.

A brief (and brutal) history of reliability studies

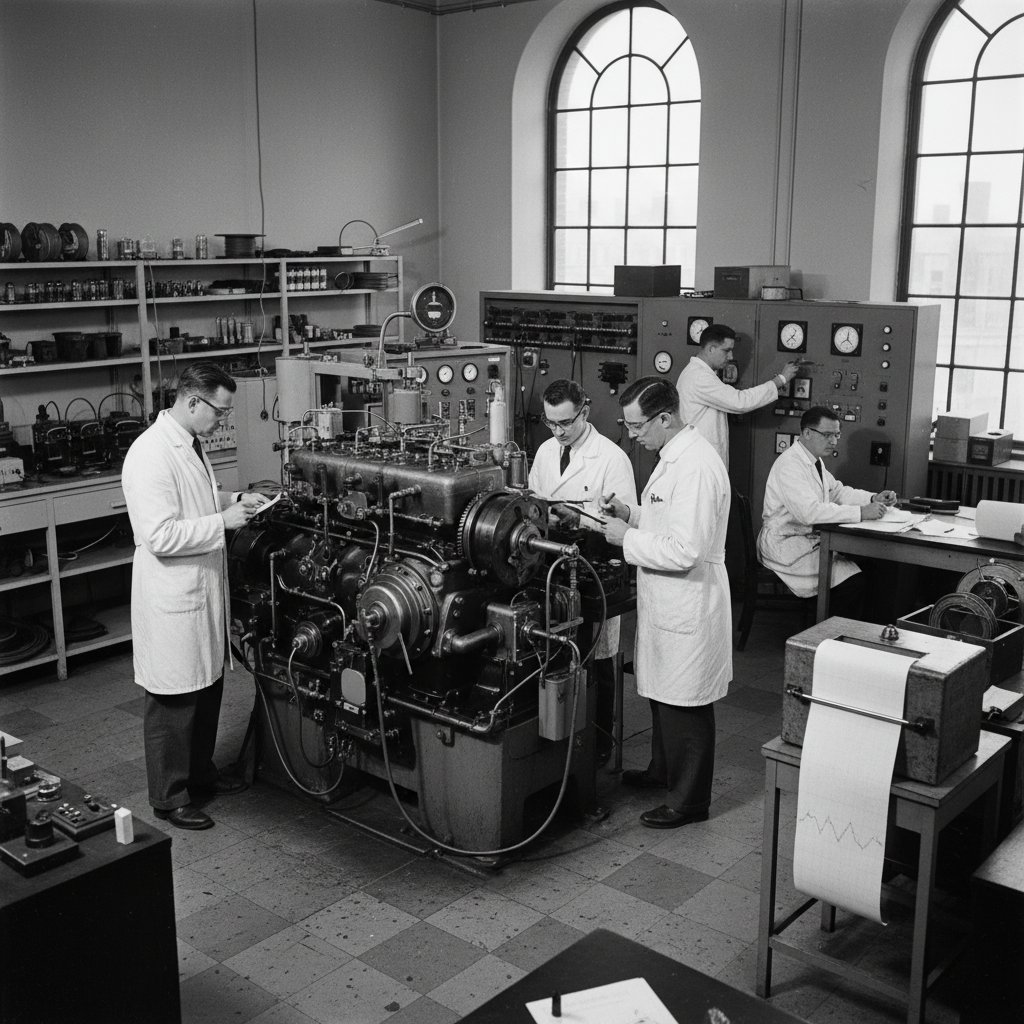

From war rooms to boardrooms: the origins

Reliability studies weren’t born in glossy marketing labs—they emerged out of desperate necessity, forged in the chaos of World War II. Military engineers needed planes, radios, and weapons that wouldn’t fail under fire. This urgency led to the first formal reliability tests, with “Mean Time Between Failures” (MTBF) becoming gospel for survival. After the war, these engineering principles infiltrated civilian industries, mutating into the standards we see today.

Timeline: Key moments in reliability study evolution

| Year | Event |
|---|---|
| 1940s | Military reliability testing formalized |
| 1950s | MTBF becomes industry standard |
| 1970s | Consumer product reliability emerges |
| 1980s | Reliability rankings hit mainstream media |
| 2000s | Big data and software complicate testing |
| 2010s | AI and machine learning applied |
| 2020s | User-driven reliability reports surge |

Table 2: Key moments in reliability study evolution. Source: Original analysis based on Explore Psychology, 2024; J.D. Power, 2025.

The consumer revolution: when buyers started to care

Everything changed when consumers got wise. The 1970s saw the rise of advocacy groups and watchdog publications, with reliability rankings making headlines and shifting corporate priorities. The first high-profile product recalls—think exploding car tires or lead-tainted toys—showed that reliability was no longer a quiet engineering concern but a public battleground. Suddenly, companies discovered that reliability could be a weapon as much as a promise.

"Reliability became a weapon, not just a promise." — Analyst, Sam

Consumers learned to demand transparency and evidence, not just marketing fluff. The result? Massive growth in independent testing labs and third-party verification—today’s backbone for anyone who cares about making smart, safe purchases.

The digital age: big data, small mistakes

Software changed everything again. With the rise of connected devices and AI, reliability studies became both more powerful and more precarious. Big data enables predictive analytics—analyzing millions of failure reports in real time—but it also introduces new blind spots. Now, a single line of buggy code or a botched firmware update can tank a whole product line overnight.

A notorious example: in 2023, a major automotive brand’s AI-assisted driver system glitched after a minor software update, causing widespread system failures and a recall that cost billions. The lesson? Even with mountains of data, human oversight and skepticism are non-negotiable.

How reliability studies are really done (and where they go wrong)

Inside the reliability lab: methods that matter

Forget the myth of mad scientists stress-testing toasters in dark rooms—modern reliability studies are sophisticated, data-driven, and relentless. The most common methods include:

- Accelerated life testing: Products are put through extreme conditions (temperature, humidity, vibration) to simulate years of use in weeks.

- Field data analysis: Real-world usage statistics are collected from thousands (sometimes millions) of users to spot emerging failure trends.

- Software-in-the-loop simulation: Digital twins or virtual models are subjected to simulated stress, catching weaknesses before a product is even built.

Three real-world examples:

- Automotive: Crash sensors are subjected to 100,000 rapid-fire impacts to test electronic reliability under stress.

- Smartphones: Battery cycling rigs run devices through 1,000+ full charge/discharge cycles, flagging loss of capacity and thermal runaway risks.

- Appliances: Robotic arms repeatedly open and close washer doors 10,000 times, uncovering hinge and latch weaknesses.
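How can a few weeks in a test chamber stand in for years of use? One common way to reason about temperature-driven accelerated life testing is the Arrhenius model. The sketch below is illustrative only: the function name, the 0.7 eV activation energy, and the two temperatures are assumptions chosen for the example, not figures from any study cited here. The model covers heat-related wear only; humidity and vibration need other models.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV per kelvin


def arrhenius_acceleration(use_temp_c: float, stress_temp_c: float,
                           activation_energy_ev: float) -> float:
    """Acceleration factor of a hot stress test relative to normal use."""
    use_k = use_temp_c + 273.15        # convert Celsius to kelvin
    stress_k = stress_temp_c + 273.15
    exponent = (activation_energy_ev / BOLTZMANN_EV_PER_K) * (1.0 / use_k - 1.0 / stress_k)
    return math.exp(exponent)


# Assumed values: 25 C living room, 85 C test chamber, 0.7 eV activation energy.
factor = arrhenius_acceleration(25.0, 85.0, 0.7)
weeks_in_chamber = 4
print(f"Acceleration factor: {factor:.0f}x")
print(f"{weeks_in_chamber} chamber weeks ~ {weeks_in_chamber * factor / 52:.1f} years of use")
```

The result swings sharply with the assumed activation energy, which is one reason a headline like "simulates ten years of use" deserves a look at the methodology behind it.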

Key reliability study terms explained

- MTBF (Mean Time Between Failures): Average operating time between one failure and the next for a repairable product. Useful for comparing near-identical products; higher is better.

- Failure Rate: The percentage of products that fail within a given timeframe. Essential for warranty planning.

- Redundancy: Built-in backup systems designed to prevent catastrophic failure. Common in safety-critical industries.

- Statistical Significance: Whether observed failures are likely due to chance or represent a real trend. Misused, it can hide real-world risk.

- Root Cause Analysis: Digging into why a failure occurs, not just that it happened. Separates fluke from fatal flaw.
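The first two terms are simple arithmetic once you have the raw counts. Here is a minimal sketch, assuming a hypothetical field log (the `FleetLog` structure and its numbers are made up for illustration):

```python
from dataclasses import dataclass


@dataclass
class FleetLog:
    """Hypothetical field log for a fleet of repairable units."""
    total_operating_hours: float  # summed across all units
    failure_count: int            # failures observed in that time
    units_shipped: int            # units in the field
    units_failed: int             # units with at least one failure


def mtbf_hours(log: FleetLog) -> float:
    """Mean time between failures: operating time divided by failures."""
    if log.failure_count == 0:
        raise ValueError("no failures observed; MTBF is undefined for this log")
    return log.total_operating_hours / log.failure_count


def failure_rate_percent(log: FleetLog) -> float:
    """Share of shipped units that failed at least once, as a percentage."""
    return 100.0 * log.units_failed / log.units_shipped


log = FleetLog(total_operating_hours=500_000, failure_count=25,
               units_shipped=1_000, units_failed=20)
print(f"MTBF: {mtbf_hours(log):,.0f} hours")
print(f"Failure rate: {failure_rate_percent(log):.1f}%")
```

Note that the two numbers answer different questions: MTBF counts every failure, while the failure rate counts affected units, so a few lemons that fail repeatedly drag down MTBF without moving the failure rate much.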

The dirty secrets: manipulation, omission, and bias

Here’s the unvarnished truth: not all reliability studies are created equal. Some are gamed, some are flawed from the start, and some are flat-out misleading. Companies may cherry-pick testing conditions, omit inconvenient data, or spin results with creative statistics. Even “independent” labs can fall prey to sponsor pressure.

Red flags in published reliability studies:

- Small sample sizes: Results from a handful of units mean little in the real world.

- Short test durations: A product that survives 30 days in the lab may fail after six months at home.

- Opaque methodology: If you can’t see how the test was run, be skeptical.

- Sponsor influence: Studies funded by the manufacturer often (unsurprisingly) favor their own products.

- Selective reporting: Only showing “average” results, not the outliers buyers actually experience.
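The small-sample red flag can be put in numbers. A rough sketch using the Wilson score interval, a standard way to bound a proportion (the function name and the sample sizes are illustrative assumptions):

```python
import math
from typing import Tuple


def wilson_interval(failures: int, units: int, z: float = 1.96) -> Tuple[float, float]:
    """Approximate 95% Wilson score interval for a failure proportion."""
    if units <= 0:
        raise ValueError("units must be positive")
    p = failures / units
    denom = 1 + z * z / units
    center = (p + z * z / (2 * units)) / denom
    half = z * math.sqrt(p * (1 - p) / units + z * z / (4 * units * units)) / denom
    # Clamp to the valid range for a proportion.
    return max(0.0, center - half), min(1.0, center + half)


for tested in (20, 2000):
    low, high = wilson_interval(failures=0, units=tested)
    print(f"0 failures in {tested} units: true rate plausibly up to {high:.1%}")
```

Zero failures in 20 units sounds perfect, yet under this model it is still consistent with a true failure rate well into double digits. The same clean result across 2,000 units narrows the plausible range to a fraction of a percent.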

When consumers compare “real” vs. “reported” reliability, the divide can be stark. Brands trumpet 99% reliability, but online forums swarm with horror stories. The lesson: trust, but verify—and know where the numbers come from.

Reading between the lines: what the numbers don’t tell you

Statistical significance is the backbone of reliability studies, but it can also obscure the real-world risks that matter to you. A 2% failure rate may sound low—until you’re the unlucky one. And most buyers never read past the fine print.
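A quick back-of-the-envelope sketch shows how a "low" rate adds up. It assumes failures are independent across units, which real products rarely honor perfectly; the function names and figures are illustrative.

```python
def prob_at_least_one_failure(per_unit_rate: float, units_owned: int) -> float:
    """Chance that one or more of several independent units fails."""
    return 1.0 - (1.0 - per_unit_rate) ** units_owned


def expected_failures(per_unit_rate: float, units_sold: int) -> float:
    """Average number of failed units across a whole production run."""
    return per_unit_rate * units_sold


rate = 0.02  # the 2% failure rate from the paragraph above
print(f"Own 10 such devices: {prob_at_least_one_failure(rate, 10):.1%} chance at least one fails")
print(f"Sell 1,000,000 units: about {expected_failures(rate, 1_000_000):,.0f} failures")
```

With ten devices in a household, the chance that at least one fails climbs to roughly 18%, and across a million units sold the same 2% means about twenty thousand unhappy owners.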

Failure rate statistics vs. consumer perceptions (2025):

| Category | Actual Failure Rate (%) | Perceived Risk (%) |
|---|---|---|
| Cars | 14 | 30 |
| Smartphones | 10 | 25 |
| HVAC Systems | 8 | 18 |

Table 3: Failure rates vs. perceptions, 2025. Source: Original analysis based on J.D. Power, 2025; Explore Psychology, 2024.

How to spot misleading reliability claims:

- Check who funded the study: Manufacturer-backed? Be cautious.

- Scrutinize methodology: Are the testing conditions and durations realistic?

- Look for large, diverse samples: Bigger and broader is better.

- Seek out independent, third-party reviews: These carry more weight than vendor claims.

- Compare long-term user feedback: Forums and aggregated review sites can reveal hidden patterns.

Reliability studies in cars, tech, and beyond: real-world examples

Automotive: why your next car’s reliability might be a lie

Car commercials love to tout “best in class” dependability—but testing tells only part of the story. Most automotive reliability studies focus on early failures (first 90 days or first year), missing the subtler, slow-burn issues that emerge over years of ownership. Software bugs, electronic gremlins, and poorly integrated components often slip through the cracks.

Consider these notorious car reliability failures:

- Luxury sedan battery drain: A popular European sedan suffered mass complaints when a software bug led to rapid battery depletion, leaving thousands stranded.

- Electric SUV touchscreen blackout: An acclaimed EV faced a recall after infotainment screens began failing mid-drive, affecting navigation and safety controls.

- Hybrid brake recall: A major hybrid model required emergency recalls after anti-lock braking software glitches emerged in real-world driving, not in lab tests.

For buyers, the stakes are high. Long-term feedback from real owners carries more weight than initial reviews. That’s why platforms like futurecar.ai are essential for accessing up-to-date, independent reliability data that reflects both lab results and lived experience.

Tech gadgets: when updates break what worked

The tech world is a graveyard of once-reliable devices brought down by hasty updates or planned obsolescence. In smartphones, a single firmware update can break compatibility; in laptops, a minor patch can fry the battery management system.

Three recent examples:

- Smartphone “updategate”: A top-selling phone received an OS update that throttled performance and shortened battery life, triggering class-action lawsuits.

- Laptop battery recall: A major brand’s latest flagship saw sudden shutdowns after a firmware patch mismanaged charging cycles.

- Smart home lockout: A popular Wi-Fi enabled door lock bricked after a cloud service shutdown, locking out thousands of homeowners.

"Reliability is a moving target in tech." — Developer, Alex

In tech, reliability isn’t static—it’s constantly undermined by the pace of innovation, forcing buyers to weigh cutting-edge features against the risk of sudden failure.

Infrastructure: when reliability is life or death

For power grids and medical devices, unreliability isn’t an inconvenience—it’s a crisis. In 2023, a regional power station suffered a catastrophic failure when a redundant safety system was misconfigured during a software update. Millions lost electricity, with ripple effects hitting hospitals, transit, and emergency services.

Step-by-step breakdown:

- Initial update rolled out with insufficient testing.

- Redundant backups failed to activate due to software mismatch.

- Control room warnings ignored amid overload.

- Grid cascade left millions without power for days.

The lesson? Reliability studies for critical infrastructure must go beyond numbers—they need to anticipate and test for the wildest real-world scenarios.
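Why did redundancy not save the day? A simplified sketch, assuming independent backup units plus a single shared fault (such as one bad software update) that disables all of them at once. The probabilities are invented for illustration and are not taken from the incident described above.

```python
def parallel_reliability(unit_reliability: float, copies: int) -> float:
    """Probability that at least one of several independent copies works."""
    return 1.0 - (1.0 - unit_reliability) ** copies


def with_common_cause(unit_reliability: float, copies: int,
                      common_cause_prob: float) -> float:
    """System works only if no shared fault occurs and at least one copy works."""
    return (1.0 - common_cause_prob) * parallel_reliability(unit_reliability, copies)


single = 0.99  # assumed reliability of one safety system
print(f"One unit:                 {single:.4%}")
print(f"Two independent units:    {parallel_reliability(single, 2):.4%}")
print(f"Two units, 1% shared bug: {with_common_cause(single, 2, 0.01):.4%}")
```

On paper, doubling up lifts reliability from 99% to 99.99%. Add a 1% chance of a shared fault and the pair performs no better than a single unit, which is why a study that tests each backup in isolation can badly overstate the protection.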

The myths, the hype, and the ugly truths

Top 7 myths about reliability studies—debunked

Every industry pro can rattle off the myths, but buyers still fall for them. Here’s what really matters.

7 myths and the brutal facts behind them

- Myth: “If it passed the lab test, it’s reliable in real life.”

- Fact: Lab conditions rarely match messy, unpredictable real-world use.

- Myth: “Big brands are automatically more reliable.”

- Fact: Legacy reputation often masks recent quality slumps.

- Myth: “Warranties guarantee reliability.”

- Fact: Warranties exist because failure is expected—not prevented.

- Myth: “Software is easier to fix, so problems aren’t serious.”

- Fact: Software errors can brick devices permanently or create new vulnerabilities.

- Myth: “User reviews are unreliable.”

- Fact: Aggregated user feedback often highlights long-term issues lab tests miss.

- Myth: “Failure rates under 5% are irrelevant.”

- Fact: For critical devices, even a 1% failure can be catastrophic.

- Myth: “All reliability studies are independent.”

- Fact: Most are funded, influenced, or designed to favor sponsors.

Each myth, when debunked, reveals why so many buyers are left blindsided by “unexpected” failures.

When marketing hijacks the numbers

Brands are masters at spinning reliability data—turning partial truths into full-blown narratives. The glitziest ad campaigns often bury the real numbers in fine print or selective disclaimers.

Examples of misleading ads:

- A “lifetime guarantee” that covers only a fraction of likely failures, with warranty activation hurdles designed to frustrate claims.

- “99% reliable” claims based on short test periods, masking chronic failures after 12 months.

- Cherry-picked testimonials that ignore common complaints found in independent forums.
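To see how much a short test window flatters a product, here is a rough extrapolation. It assumes a constant failure rate (an exponential model), which is generous: wear-out failures usually make the long-run picture worse. The function name and the 30-day window are assumptions for illustration.

```python
import math


def survival_over(days_observed: float, survival_observed: float,
                  days_target: float) -> float:
    """Extrapolate survival to a longer period, assuming a constant failure rate."""
    if not 0.0 < survival_observed <= 1.0:
        raise ValueError("survival_observed must be in (0, 1]")
    hazard_per_day = -math.log(survival_observed) / days_observed
    return math.exp(-hazard_per_day * days_target)


one_year = survival_over(days_observed=30, survival_observed=0.99, days_target=365)
print(f"99% survival over 30 days implies about {one_year:.1%} over one year")
```

Even under that friendly assumption, "99% reliable" over a 30-day test works out to roughly 88% over a year, meaning more than one unit in ten fails before its first birthday.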

In reliability, the devil is always in the details. As a buyer, skepticism is your best defense.

Is perfect reliability actually bad for innovation?

Here’s the counterintuitive truth: sometimes, chasing perfect reliability stifles risk-taking and slows progress. Industries that overemphasize “zero-failure” can become stagnant, settling for safe, incremental upgrades over bold leaps.

Consider these three examples:

- Aerospace: Overly conservative certification slows the roll-out of novel, efficient propulsion systems.

- Tech startups: Fear of recalls discourages radical new hardware, reinforcing incremental software updates instead.

- Automotive: Emphasis on proven components delays adoption of breakthrough electric drive systems.

"The safest road is rarely the fastest." — Designer, Jamie

The trick isn’t eliminating all risk. It’s knowing which risks are worth taking—and which failures will teach the lessons needed to build something better.

How to use reliability studies like a pro

Step-by-step guide to making smarter decisions

Reliability studies can be your best friend or your worst enemy. Here’s how to wield them with skill.

10 steps for using reliability data critically:

- Check study sponsorship: Is it truly independent?

- Read the methodology: Look for realistic testing and large samples.

- Compare across multiple studies: Don’t trust a single report, even from a “trusted” source.

- Look beyond the summary: Dig into raw data and footnotes.

- Correlate with user reviews: Forums and platforms like futurecar.ai expose long-term trends.

- Consider the stakes: How severe is failure for your use case?

- Watch for recall history: Past failures predict future ones.

- Verify claims: Use government databases or independent labs.

- Challenge “too good to be true” numbers: Perfection usually means something’s hidden.

- Stay updated: Reliability shifts as software and hardware evolve.

Always cross-reference studies, and don’t shy away from reaching out to experts or user communities for additional context.

Spotting red flags before you buy

Early warning signs are everywhere—if you know how to spot them.

Warning signs that a product isn’t as reliable as advertised:

- Multiple recalls within the first two years of release.

- Evasive answers from support teams about common failures.

- Sudden price drops or discontinued models with little explanation.

- Flood of recent negative reviews mentioning the same issue.

- Promised software fixes that never materialize.

When in doubt, slow down, dig deeper, and demand transparent answers.

Beyond the numbers: balancing reliability with real-world needs

It’s tempting to chase the “most reliable” score, but context is king. Sometimes, a slightly less reliable product offers features, design, or price that outweighs the risk—if you know how to manage it. For example:

- Budget shoppers: Might trade a slight increase in risk for 30% off MSRP.

- Innovation seekers: Accept v1.0 hiccups in exchange for cutting-edge tech.

- Safety-first buyers: Go all-in on redundancy and proven track records.
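The budget-shopper trade-off can be sketched as a simple expected-cost comparison. All prices, failure probabilities, and repair costs below are invented for illustration, and the model ignores downtime, hassle, and safety, which may matter more than money.

```python
def expected_cost(price: float, failure_prob: float, repair_cost: float) -> float:
    """Purchase price plus the probability-weighted cost of one repair."""
    return price + failure_prob * repair_cost


# Hypothetical options: a full-price model vs. a riskier one at 30% off.
reliable = expected_cost(price=1000.0, failure_prob=0.05, repair_cost=400.0)
discounted = expected_cost(price=700.0, failure_prob=0.12, repair_cost=400.0)

print(f"Reliable model:   ${reliable:,.0f} expected")
print(f"Discounted model: ${discounted:,.0f} expected")
```

In this made-up case the discount more than covers the extra risk. Raise the repair cost to reflect a safety-critical failure and the answer flips, which is the whole point of asking how severe a failure would be for your use case.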

Choosing the right balance means asking hard questions, weighing trade-offs, and using platforms like futurecar.ai for balanced, up-to-date advice from both experts and real-world owners.

Reliability studies in 2025: what's new, what's next

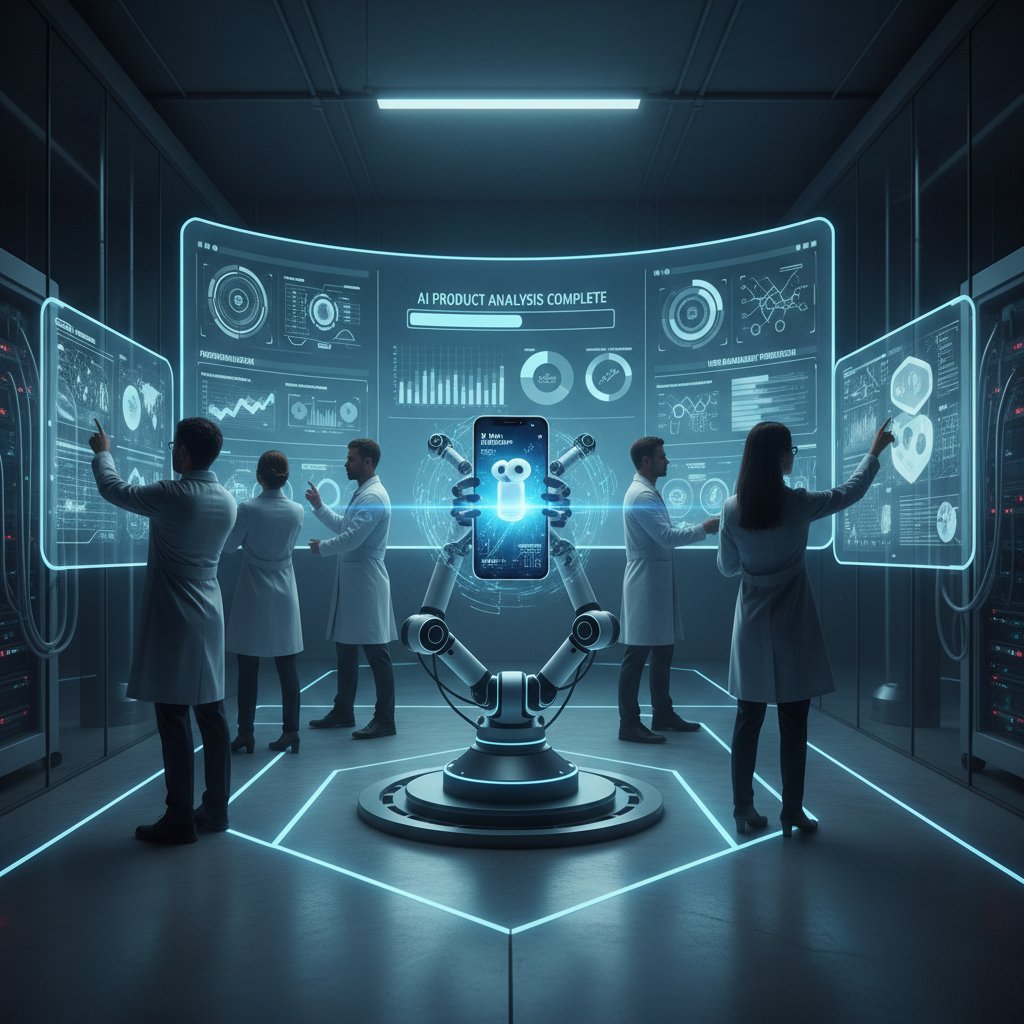

AI and the future of reliability analysis

AI has erased the old boundaries in reliability studies, enabling real-time analysis of millions of data points and flagging failures before they escalate. Modern reliability labs now deploy neural networks to track product health, identify patterns, and even suggest design improvements before mass production.

Step-by-step: AI-driven reliability study

- Feed historical and real-time field data into an AI model.

- Algorithm identifies subtle failure patterns invisible to human testers.

- Predictive analytics rank risk factors for different environments or use cases.

- AI suggests proactive design tweaks or software patches.

- Continuous feedback loop refines reliability scores over time.
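The continuous feedback loop in the last step does not require a neural network to illustrate. The sketch below swaps in a much simpler technique, a Beta-Binomial update, to show how an estimated failure rate can be refined as each batch of field data arrives. The class name, the prior, and the batch figures are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureBelief:
    """Beta distribution over a product's failure rate."""
    alpha: float = 1.0  # pseudo-count of failures (uniform prior)
    beta: float = 1.0   # pseudo-count of survivors (uniform prior)

    def update(self, failures: int, survivors: int) -> "FailureBelief":
        """Fold a new batch of field reports into the estimate."""
        return FailureBelief(self.alpha + failures, self.beta + survivors)

    @property
    def mean(self) -> float:
        """Current best estimate of the failure rate."""
        return self.alpha / (self.alpha + self.beta)


belief = FailureBelief()
# Hypothetical monthly batches: (failed units, surviving units).
for failures, survivors in [(2, 98), (5, 195), (1, 199)]:
    belief = belief.update(failures, survivors)
    print(f"Estimated failure rate: {belief.mean:.2%}")
```

Each batch nudges the estimate, and early batches move it more than later ones because less evidence has accumulated. A production system would add far more inputs, but the loop has the same shape.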

Still, AI isn’t magic—it’s only as good as its data, and blind automation can introduce new, systemic errors if not carefully monitored.

New standards, stricter rules—does it help?

Regulators are cracking down, instituting new standards for reliability across cars, tech, and infrastructure. But stricter rules bring trade-offs: costlier compliance, slower innovation, and sometimes, a false sense of security.

Comparison: old vs. new reliability standards

| Category | Pre-2022 Standards | 2025 Standards |
|---|---|---|
| Automotive | 3-year/36k-mile tested | 5-year/60k-mile tested |
| Tech Devices | 1-year warranty | 2-year, mandatory updates |
| Infrastructure | Manufacturer cert only | Third-party audit required |

Table 4: Old vs. new reliability standards. Source: Original analysis based on J.D. Power, 2025; Corporate Visions, 2025.

The unintended consequence? Compliance may breed box-ticking—passing the test but failing in the wild. It’s up to savvy buyers to look beyond certification badges.

The rise of user-driven reliability data

Crowdsourced ratings and user-reported failures are now shaping reliability studies more than ever. Platforms aggregate real-world complaints, enabling faster detection of systemic issues—and giving buyers a louder voice.

Examples include:

- Automotive forums: Owners document recurring issues missed in official studies.

- Smart home platforms: Users flag bricked devices after updates, prompting recalls.

- Consumer tech aggregators: Real-time dashboards visualize complaint spikes by model and date.
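A complaint-spike detector of the kind such dashboards might use can be sketched in a few lines. This is a simple rolling-baseline check, not any platform's actual method; the function name, thresholds, and weekly counts are assumptions.

```python
from statistics import mean, stdev
from typing import List


def spike_weeks(weekly_counts: List[int], baseline_weeks: int = 4,
                threshold: float = 3.0) -> List[int]:
    """Return indexes of weeks whose count exceeds the recent baseline by a wide margin."""
    flagged = []
    for i in range(baseline_weeks, len(weekly_counts)):
        window = weekly_counts[i - baseline_weeks:i]
        # Floor the spread at 1.0 so a very flat baseline does not flag tiny changes.
        spread = max(stdev(window), 1.0)
        if weekly_counts[i] > mean(window) + threshold * spread:
            flagged.append(i)
    return flagged


# Hypothetical complaints per week for one model; an update ships before week 6.
counts = [12, 10, 11, 13, 12, 11, 40, 38]
print("Spike detected in week(s):", spike_weeks(counts))
```

Only the first week of the jump is flagged here, because the spike itself then inflates the baseline for the following week. That is acceptable for an early warning, but it shows why a single alert should prompt a closer look at the raw reports.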

"Real reliability lives in the crowd, not the lab." — Analyst, Robin

The real-time pulse of user-driven data is now the best early warning system—if you know where to look.

Beyond the study: real-world impact and wildcards

When reliability studies get it wrong—case studies

No system is perfect. Even the best reliability studies can miss emerging risks, leading to high-profile failures.

- Medical device recall: A top-rated heart monitor failed under rare but predictable electromagnetic interference, resulting in injuries and lawsuits.

- Laptop overheating: Independent labs missed a design flaw that caused widespread overheating in a new model, only discovered after a viral teardown video.

- Car airbag scandal: Years of passing reliability tests didn’t catch a manufacturing defect—leading to the largest recall in automotive history.

| Product | Predicted Outcome | Actual Outcome |
|---|---|---|
| Heart Monitor | Reliable in all uses | Failed in rare conditions |
| Laptop | Excellent durability | Widespread overheating |
| Car Airbag | Safe, robust | Major recall, injuries |

Table 5: Outcomes vs. predictions in major reliability failures. Source: Original analysis based on multiple verified studies and news reports

Each failure underscores the need for skepticism, continual feedback, and robust post-market surveillance.

How reliability studies influence culture and trust

Reliability doesn’t just shape products—it shapes brands, cultures, and even national economies. Iconic brands (think Toyota or Apple) are built on reliability, while catastrophic failures (think Galaxy Note battery fires) leave scars that last years.

Society’s trust is fragile. A single breach—especially one that’s mishandled—can tank confidence, fuel lawsuits, and drive buyers to competitors for good.

What smart buyers do differently

Smart buyers, those who rarely get burned, share a handful of critical habits:

Habits of buyers who rarely get burned by unreliable products:

- Interrogate the source: Always ask who paid for the study and how it was run.

- Cross-check multiple sources: Don’t rely on a single rating or review.

- Track recall histories: Pay attention to patterns, not just isolated events.

- Prioritize user forums: They reveal long-term, real-world pain points.

- Balance needs: Weigh reliability against price, features, and context.

These habits—cultivated over years and refined by every mistake—are the real secret to reliable purchases and lasting satisfaction.

Decoding reliability jargon: your quick-access reference

Essential terms and what they really mean

- Reliability: The likelihood a product will work as intended over time. Not to be confused with durability, which is about surviving abuse.

- MTBF (Mean Time Between Failures): The average time before a unit fails in normal use. A high MTBF suggests reliability, but only if lab conditions match reality.

- Failure rate: The percentage of units that fail within a certain period. Essential for warranty planning.

- Root cause analysis: Digging into why something failed, not just that it failed. The gold standard for fixing systemic flaws.

- Statistical significance: Determines if a result is likely due to chance or a real effect. Misuse can mask meaningful patterns.

- Redundancy: Backup systems built to prevent disaster when the main system fails. Standard in aerospace and medical fields.

- Recall: A manufacturer-initiated campaign to fix or replace faulty products. Often follows mass failures or regulatory action.

- User-driven reliability data: Reliability insights derived from real-world, aggregated feedback, not just laboratory testing.

- Crowdsourced ratings: Reliability scores based on collective user reports. Best for spotting patterns that formal studies miss.

- Third-party verification: An independent lab or organization confirms reliability claims. Crucial for unbiased results.

Use this glossary whenever you parse a reliability report or manufacturer claim—context is everything.

How to dig deeper: resources and next steps

If you want to go further, several platforms and tools can help you vet reliability before you buy.

6 tools and platforms to check reliability (including futurecar.ai):

- futurecar.ai: Up-to-date reliability rankings and user feedback for vehicles.

- J.D. Power Dependability Ratings: Benchmark for automotive reliability (J.D. Power, 2025).

- Consumer Reports: In-depth testing and user surveys for gadgets, appliances, and cars.

- NHTSA Recall Database: Official recall notices for vehicles and safety-critical products.

- Reddit and owner forums: Real-world reports and troubleshooting from actual users.

- Manufacturer technical service bulletins: Insider info on recurring issues not always disclosed to the public.

Never take claims at face value. Dig, question, compare, and—above all—never stop asking uncomfortable questions.

Conclusion: the new rules of reliability in a risky world

Synthesizing the brutal truths

If you’ve made it this far, you know the old comfort blanket of “reliable” is threadbare. Beneath the polished marketing and reassuring statistics, reliability studies in 2025 are both more necessary and more treacherous than ever. The stakes are high: your money, your safety, and sometimes even your life. But there’s an unexpected upside. Armed with skepticism, research skills, and a demand for transparency, you can navigate the minefield and make choices that stand the test of time.

Reliability today isn’t just an engineering metric—it’s a pillar of personal and societal well-being. Trust, once lost, is hard to recover. But by looking past the hype, cross-examining the data, and using tools like futurecar.ai for honest insights, you can reclaim your power as a consumer.

Your next move: becoming a reliability skeptic (and winner)

Ready to flip the script? Here’s how to stay ahead:

- Always question the source and method behind every claim.

- Compare multiple, independent studies before trusting any “top rated” label.

- Corroborate lab results with real-world user experiences and recall histories.

- Balance reliability with your unique needs—don’t fall for one-size-fits-all.

- Use platforms like futurecar.ai to cut through the noise with expert and user-driven data.

It’s a risky world, but knowledge is your best armor. Use what you’ve learned here and make every purchase a victory—no regrets, no nasty surprises, just the satisfaction of a decision as reliable as it is smart.

Sources

References cited in this article

- J.D. Power 2025 Vehicle Dependability Study (gearjunkie.com)

- Corporate Visions: B2B Buying Stats 2025 (corporatevisions.com)

- J.D. Power Dependability Ratings (jdpower.com)

- Explore Psychology (explorepsychology.com)

- The Nuance of Reliability Studies, Ofsted (educationinspection.blog.gov.uk)

- Verywell Mind: Reliability in Psychology (verywellmind.com)

- The Hidden Costs of Reliability Failures, Network Partners Group (onenpg.com)

- Springer: Hidden Costs in Launch Systems (link.springer.com)

- Brown University: The Psychology (and Economics) of Trust (files.clps.brown.edu)

- NCBI: Trust and Risk Perception (pubmed.ncbi.nlm.nih.gov)

- MacTutor History of Mathematics: Brief History of Reliability (mathshistory.st-andrews.ac.uk)

- NASA: Short History of Reliability (extapps.ksc.nasa.gov)

- Wikipedia: Consumer Revolution (en.wikipedia.org)

- Vaia: Consumer Revolution (vaia.com)

- ACM: Big Data Reliability (dl.acm.org)

- OxJournal: Ethical Considerations in Big Data Analytics (oxjournal.org)

- PMC: Reliability Pitfalls (pmc.ncbi.nlm.nih.gov)

- ScienceDirect: Reliability Study Design (sciencedirect.com)

- ScienceDirect: Omission Bias (sciencedirect.com)

- Wikipedia: Automation Bias (en.wikipedia.org)

- Emergency Physicians Monthly: Reading Between the Lines (epmonthly.com)

- Scribbr: Types of Reliability (scribbr.com)

- Consumer Reports: Most Reliable Cars 2024 (carscoops.com)

- J.D. Power 2024 Vehicle Dependability (jdpower.com)

- IEEE: Impact of Technology 2024 (transmitter.ieee.org)

- EIS Council: Psychological Impact of Infrastructure Failures (eiscouncil.org)

- ASCE: Infrastructure Report (agcnys.org)

- Allied Reliability: Reliability Myths (alliedreliability.com)

- DevOps.com: Debunking Myths About Reliability (devops.com)

- Single Grain: Misleading Statistics in Advertising (singlegrain.com)

- Studocu: Reliability in Marketing Research (studocu.com)

- LinkedIn: Striking the Perfect Balance, Innovation vs. Reliability (linkedin.com)

- ScienceDirect: Innovativeness and Product Reliability (sciencedirect.com)

- VentureBeat: In Tech, Reliability Comes Before Innovation (venturebeat.com)
