Natural Language, Real Power: Who Controls the AI Conversations?

Pull up a chair and brace yourself: everything you think you know about “natural language” is about to get dismantled. Language is supposed to be the easiest thing in the world—an instinct, a birthright, the invisible glue holding society together. In reality, it’s a minefield: coded, manipulated, commodified, and, increasingly, hijacked by machines that don’t care what you meant—only what you probably meant. From the ancient cave walls to the algorithmic feeds controlling your news, natural language has never been “natural.” It’s a weapon, a market, and a source of power. In 2024, as AI-driven conversations shape everything from elections to your next car, the cost of ignoring the raw truth behind language is higher than ever. If you think words are harmless, think again. Here are 11 brutal truths about natural language that will change how you think, communicate, and interact with technology—before it rewrites you.

The myth of natural language: are we really understood?

Why ‘natural’ language is anything but natural

Language never just “was.” It’s always been a battleground, shaped by the victors, the censors, the inventors, and now, the coders. The idea that there’s something pure or innate about the way humans speak is a dangerous myth—one that gets shattered every time a new technology or regime upends the rules. Linguists have documented how empires, migrations, and even the humble printing press rewired vocabulary, syntax, and meaning. Today, algorithms sift and sort our words, often amplifying certain voices while drowning out others.

"Language has always been a battleground, not a birthright." — Maya

Hidden influences on language include:

- Power: Who gets to define what words mean changes how entire societies think.

- Technology: Every communication leap—from writing to the internet—reshapes language’s form and function.

- Migration: Movement of people scrambles linguistic boundaries, spawning new dialects and hybrid expressions.

- Censorship: Political or corporate suppression can erase words, ideas, and histories.

- AI-driven bias: Algorithmic models now reinforce—or challenge—existing prejudices, sometimes invisibly.

Natural language is a living artifact, molded by everything but nature itself.

How machines ‘think’ they understand you

NLP, or natural language processing, promises machines that “get” you. The reality is both more impressive and more limited than the hype suggests. At its core, a modern NLP system chops your sentences into tokens and predicts what comes next from statistical patterns learned across oceans of training data, not from understanding. Large Language Models (LLMs) like GPT-4 “autocomplete” the universe: they produce plausible guesses rather than demonstrate genuine comprehension.
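To make "predicts what comes next using statistics" concrete, here is a minimal sketch of a bigram model, the simplest form of next-word prediction. It is not how GPT-4 works internally (LLMs use neural networks over sub-word tokens); the toy corpus and the function names `tokenize`, `train_bigrams`, and `predict_next` are invented for illustration.

```python
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split on whitespace (a deliberately crude tokenizer)."""
    return text.lower().split()


def train_bigrams(corpus):
    """Count, for each token, which tokens follow it in the corpus."""
    follows = defaultdict(Counter)
    for sentence in corpus:
        tokens = tokenize(sentence)
        for current, nxt in zip(tokens, tokens[1:]):
            follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus, invented for illustration only.
corpus = [
    "the car starts",
    "the car stops",
    "the car starts quickly",
    "the engine starts",
]
model = train_bigrams(corpus)
print(predict_next(model, "car"))     # the most frequent word after "car"
print(predict_next(model, "banana"))  # unseen word: no prediction at all
```

The model has no idea what a car is. It only knows which word most often followed "car" in the text it was shown, and it has nothing to say about a word it never saw.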

| Aspect | Human | AI (NLP Model) |
|---|---|---|
| Nuance | Deep, context-rich | Shallow, often missed |
| Context | Multi-layered, flexible | Narrow, window-limited |
| Emotion | Intuitive, embodied | Pattern-matched, simulated |
| Bias Detection | Sometimes, self-aware | Blind to subtle context |
| Improvisation | Creative adaptation | Statistical extrapolation |

Table 1. Human vs. AI language comprehension. Source: Original analysis based on Nature Scientific Reports, 2024, and Medium, 2024.

Because machines don’t “mean” anything, their mistakes can be catastrophic: a translation gaffe in a medical setting, a chatbot misunderstanding a crisis, or generative AI spewing plausible-sounding nonsense. According to Nature Scientific Reports (2024), empirical tests show that the myth of machine “understanding” collapses under real-world ambiguity and irony.

Debunking the AI language myth

The “AI gets language” myth is one of the biggest lies sold by Silicon Valley. Here’s what most get wrong:

- AI understands sarcasm and humor (spoiler: it doesn’t, not reliably).

- NLP is unbiased because it’s “just math.”

- Bots are sentient or emotionally aware.

- Language models can distinguish truth from fiction.

- More data always equals better understanding.

What current AI does: It predicts the next likely word or phrase given a prompt, based on statistical patterns learned from massive datasets. What it can’t do: Reliably grasp intent, context, or subtext that falls outside its training data or its context window.

"Most bots are just really good at guessing, not understanding." — Alex

So, while AI models are dazzling in speed and scale, their “language” is a mask—not a mind.

From cave walls to code: a brief (and wild) history of language tech

The first language hacks

Long before your phone autocorrected your texts, humans were hacking communication. The earliest language tech? Cave paintings—symbols that compressed stories into marks. From there, every leap in human history came with new ways to encode, transmit, and manipulate language.

- Cave paintings (c. 40,000 BCE): First attempts at externalizing thought.

- Early writing systems (c. 3,000 BCE): Cuneiform and hieroglyphs begin to standardize meaning.

- Printing press (1440 CE): Mass distribution, literary standardization.

- Telegraph (1837): Language goes electric, carrying condensed messages across continents.

- Turing Test (1950): Can machines “fool” us into thinking they understand?

- ELIZA chatbot (1966): Early NLP using pattern-matching scripts.

- Statistical NLP (1990s): Computers start learning from data, not rules.

| Year | Innovation | Impact |
|---|---|---|
| 40,000 BCE | Cave paintings | Birth of symbolic communication |
| 3,000 BCE | Writing systems | Codified language, enabled laws and literature |
| 1440 CE | Printing press | Knowledge democratized, language standardized |
| 1837 | Telegraph | Instant, long-distance text communication |
| 1950 | Turing Test | Benchmark for machine “intelligence” in conversation |
| 1966 | ELIZA chatbot | Psychological illusion of machine dialogue |
| 1990s | Statistical NLP | Data-driven language processing emerges |

Table 2. Timeline of key advances in language technology. Source: Original analysis based on historical milestones and Bram Adams, 2024.

The computer revolution: when language met silicon

The digital era didn’t just accelerate communication; it transformed its DNA. Early computational linguists tried to make sense of grammar with rules and logic. These hand-coded systems were brittle, failing at anything outside textbook examples. As the internet exploded, so did the volume and diversity of language data. Suddenly, slang, memes, and dialects overwhelmed the old rulebooks, requiring new approaches.

First-gen NLP tools struggled with ambiguity—mixing up “bass” the fish with “bass” the instrument, for example. The gap between how humans improvise and how machines process remained a chasm.
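The “bass” problem is known as word sense disambiguation. A rough sketch of one classic approach, counting overlap between the sentence and clue words for each sense (in the spirit of the Lesk algorithm, heavily simplified), looks like this. The `SENSES` inventory and the `disambiguate` function are hypothetical and hand-built for the example; real systems draw on lexical resources or trained models.

```python
# Hypothetical mini sense inventory; real systems use resources such as WordNet.
SENSES = {
    "fish": {"fish", "lake", "river", "caught", "fishing", "water"},
    "instrument": {"guitar", "band", "music", "play", "played", "amplifier"},
}


def disambiguate(sentence, senses=SENSES):
    """Pick the sense whose clue words overlap most with the sentence.

    Returns None when no sense has any overlap, instead of guessing.
    """
    words = set(sentence.lower().replace(".", "").replace(",", "").split())
    scores = {sense: len(words & clues) for sense, clues in senses.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None


print(disambiguate("He caught a huge bass in the lake"))
print(disambiguate("She played bass in a punk band"))
print(disambiguate("I love the bass"))
```

The third sentence shows the chasm: with no clue words nearby, the sketch has nothing to go on, while a human would lean on who is speaking and where.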

Machine learning changes the rules

The pivot to machine learning—especially neural networks—was the single biggest upheaval since Gutenberg. Instead of static rules, algorithms now gobbled up massive text corpora, learning to mimic speech patterns through “deep learning.” The rollout of LLMs (like GPT-4) meant that machines could compose emails, poems, even code, in seconds.

"We taught machines to talk, but we never taught them to listen." — Jordan

These models became cultural phenomena—fueling art, controversy, and moral panic. But the black box deepened: nobody could fully explain why a model chose one phrase over another. The more “natural” machine language sounds, the more dangerous its errors become.

Inside the black box: how natural language processing really works

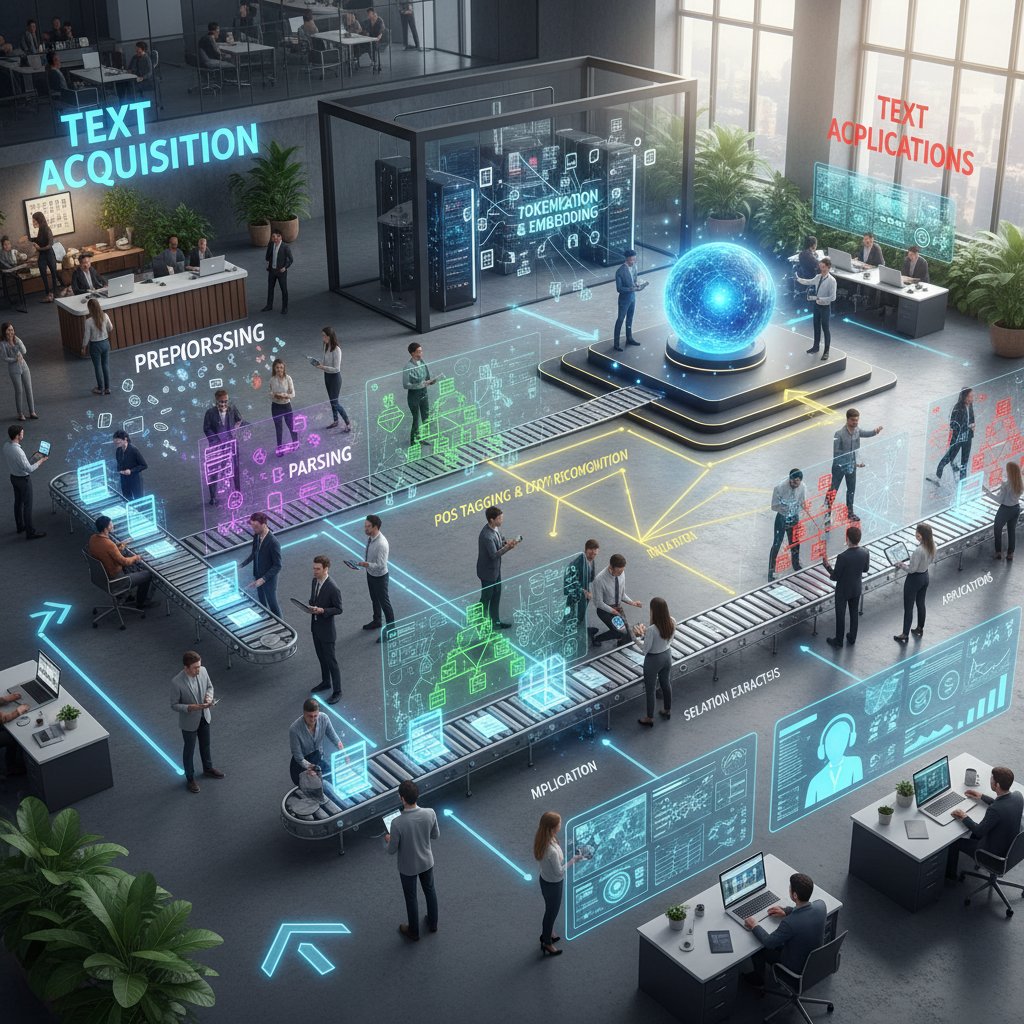

Breaking down the NLP pipeline

Natural language processing is a beast—a complex sequence of steps that tries to make sense of the wild mess that is human speech.

- Tokenization: Slicing input text into words, sentences, or sub-word units called tokens.

- Parsing: Analyzing grammatical structure—what’s the subject, what’s the verb?

- Semantic analysis: Assigning meaning to words and their relationships.

- Intent detection: Guessing what the user really wants.

- Generation: Producing fluent, plausible responses.

Key terms:

- Tokenization: Breaking text into discrete units (tokens) for processing.

- Natural language understanding (NLU): The AI’s attempt to extract meaning and intent from language.

- Natural language generation (NLG): Producing coherent text based on context and prompts.

- Transformer: A neural network architecture that revolutionized NLP by enabling massive parallel processing of text.

- Embeddings: Numeric representations of words capturing meaning and relationships.

Each step can introduce errors, amplifying misunderstandings like a game of digital “telephone.”
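A stripped-down sketch of three of these stages (tokenization, intent detection, generation) shows how an early miss carries through to the final answer. Everything here is an assumption made for illustration: the intents, the keyword sets, the canned responses, and the function names. Parsing and semantic analysis are skipped entirely, and production systems use trained models rather than keyword lists.

```python
import re

# Hypothetical intents and trigger words, invented for this sketch.
INTENT_KEYWORDS = {
    "navigate": {"navigate", "directions", "route", "drive"},
    "play_media": {"play", "music", "song", "podcast"},
    "set_climate": {"temperature", "heat", "cool", "warmer", "colder"},
}

# Canned responses; None is the fallback when no intent is detected.
RESPONSES = {
    "navigate": "Starting navigation.",
    "play_media": "Playing your media.",
    "set_climate": "Adjusting the climate control.",
    None: "Sorry, I did not understand that.",
}


def tokenize(text):
    """Tokenization: split lowercase text into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def detect_intent(tokens):
    """Intent detection: choose the intent with the most keyword hits, or None."""
    token_set = set(tokens)
    scores = {intent: len(token_set & words) for intent, words in INTENT_KEYWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None


def generate(intent):
    """Generation: return a canned response for the detected intent."""
    return RESPONSES[intent]


def respond(text):
    """Run the three stages in sequence."""
    return generate(detect_intent(tokenize(text)))


print(respond("Play some music"))
print(respond("Drive me home"))
print(respond("It's freezing in here"))
```

The last request is a plea for heat, but no keyword matches, so the intent step returns nothing and the generation step can only apologize. The error was made upstream and simply passed along.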

Why context is the holy grail (and still out of reach)

The biggest Achilles’ heel of NLP? Context. Human language is loaded with subtext, cultural cues, and personal histories. But most AI only sees a “context window”—a fixed chunk of text. Anything outside that vanishes.
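Here is a minimal sketch of what "anything outside that vanishes" means in practice. It assumes a simple strategy of keeping only the most recent messages that fit a fixed budget, and it approximates tokens by whitespace-separated words. The function name `visible_context` and the conversation are invented for the example; real systems count sub-word tokens and may use other truncation strategies.

```python
def visible_context(messages, max_tokens):
    """Keep the most recent messages that fit within a fixed token budget.

    Tokens are approximated by whitespace-separated words; real models
    use sub-word tokenizers, so actual counts differ.
    """
    kept = []
    used = 0
    for message in reversed(messages):
        cost = len(message.split())
        if used + cost > max_tokens:
            break  # everything older than this point is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))


# Hypothetical conversation, oldest message first.
conversation = [
    "My name is Dana and I am allergic to peanuts",
    "I am planning a dinner party for Saturday",
    "Can you suggest a dessert recipe",
]
print(visible_context(conversation, max_tokens=15))
```

With a budget of 15 words, the allergy mentioned in the first message never reaches the model, so nothing stops it from suggesting a peanut dessert.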

Real-world example: In 2024, a major translation AI failed to catch the double meaning in a political slogan, triggering a diplomatic incident. Chatbots routinely miss sarcasm or inside jokes, leading to either flat or inappropriate responses.

Famous NLP failures due to missing context:

- Automated customer support mistaking distress for routine queries.

- Translation engines butchering idioms (“kick the bucket” rendered literally).

- News summarizers misrepresenting tone, flipping facts on their head.

Despite billions of parameters, AI still chokes on the nuances people take for granted.

The data dilemma: training, bias, and garbage in, garbage out

Every AI model is only as smart—and as ethical—as the data it’s fed. If a dataset is skewed, the outputs will be too. In 2024, high-profile AI tools were caught echoing racist, sexist, or simply outdated assumptions because their training data reflected existing societal biases.

| Scenario | Bias Type | Real-World Impact |
|---|---|---|
| Resume screening AI favors “white names” | Racial bias | Qualified candidates excluded from hiring |
| Gendered language in translation | Gender bias | Reinforcement of stereotypes in global media |
| Predictive policing chatbots | Socioeconomic bias | Targeted communities over-policed |

Table 3. Examples of biased language data and AI errors. Source: Original analysis based on Nature Scientific Reports, 2024, and Medium, 2024.

Fixing AI bias means going to the root—rethinking the sources, filtering, and auditing of every line of training data.
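"Garbage in, garbage out" can be shown with a few lines of counting. The sketch below builds a frequency-based "model" from a deliberately skewed toy corpus; the sentences, the fixed sentence template, and the function names are all invented for illustration and are not real training data.

```python
from collections import Counter, defaultdict

# Hypothetical, deliberately skewed toy corpus; not real data.
corpus = [
    "the engineer said he fixed it",
    "the engineer said he was late",
    "the engineer said he agreed",
    "the engineer said she agreed",
    "the nurse said she was late",
    "the nurse said she fixed it",
    "the nurse said she agreed",
    "the nurse said he agreed",
]


def pronoun_counts(sentences):
    """Count which pronoun appears in sentences about each profession."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        # Every toy sentence follows "the <profession> said <pronoun> ...".
        profession, pronoun = tokens[1], tokens[3]
        counts[profession][pronoun] += 1
    return counts


def most_likely_pronoun(counts, profession):
    """A frequency-based 'model': pick the pronoun seen most often."""
    return counts[profession].most_common(1)[0][0]


counts = pronoun_counts(corpus)
print(most_likely_pronoun(counts, "engineer"))
print(most_likely_pronoun(counts, "nurse"))
```

Nobody wrote a rule that says engineers are men. The skew in the data became the skew in the output, which is why auditing starts with the corpus rather than the code.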

Real-world NLP: where you meet natural language every day

Your daily AI: from voice assistants to social feeds

Natural language technology is now baked into your daily routine, whether you notice or not. Every time you ask Siri for weather, search Google, or skim your social feed, an NLP model is shaping what you see, hear, and read. These systems decide which ads you get, which messages get flagged or promoted, and even which friends’ posts you see first.

NLP subtly shapes your experience—nudging you toward certain choices and even altering how you communicate. According to Ipsos, 2023, more than 70% of Americans now interact with at least one AI-powered language tool daily.

Natural language in the driver's seat: cars that talk and listen

The automotive world is ground zero for next-gen language tech. Virtual assistants inside cars aren’t just novelties—they’re becoming essential safety and convenience features. Leading resources like futurecar.ai provide guidance on evaluating these systems.

Voice control varies wildly: Tesla’s system may interpret “open the glove box” perfectly, while older models fumble with anything but the most literal command. Reviewers note that BMW’s assistant excels at navigation, while Ford’s stands out for entertainment controls.

Steps to evaluate a car’s NLP system before buying:

- Test voice commands for navigation, climate, and media.

- Try ambiguous or slang phrases to gauge flexibility.

- Check response speed and error handling.

- Assess noise filtering in real-world driving.

- Ask about update frequency and security.

Don’t just trust the marketing—put these systems through their paces before you drive off the lot.

When NLP goes wrong: failures, flubs, and fiascos

Some of the biggest AI embarrassments have come from botched language processing. In 2024, a major airline’s chatbot infamously offered “free trips for complaints,” misunderstanding customer requests. Translation apps still mangle idioms and legal documents, leading to costly confusion.

The biggest public embarrassments in AI language gone wrong:

- Chatbots giving bizarre or offensive responses on social media.

- Automated translations turning official speeches into jokes.

- Voice assistants dialing emergency services by mishearing commands.

Each failure teaches the same lesson: real-world language is messy, and even the best AI isn’t immune to human error—just faster at making it.

Ongoing challenges include refining intent recognition, better handling of edge cases, and transparent error reporting.

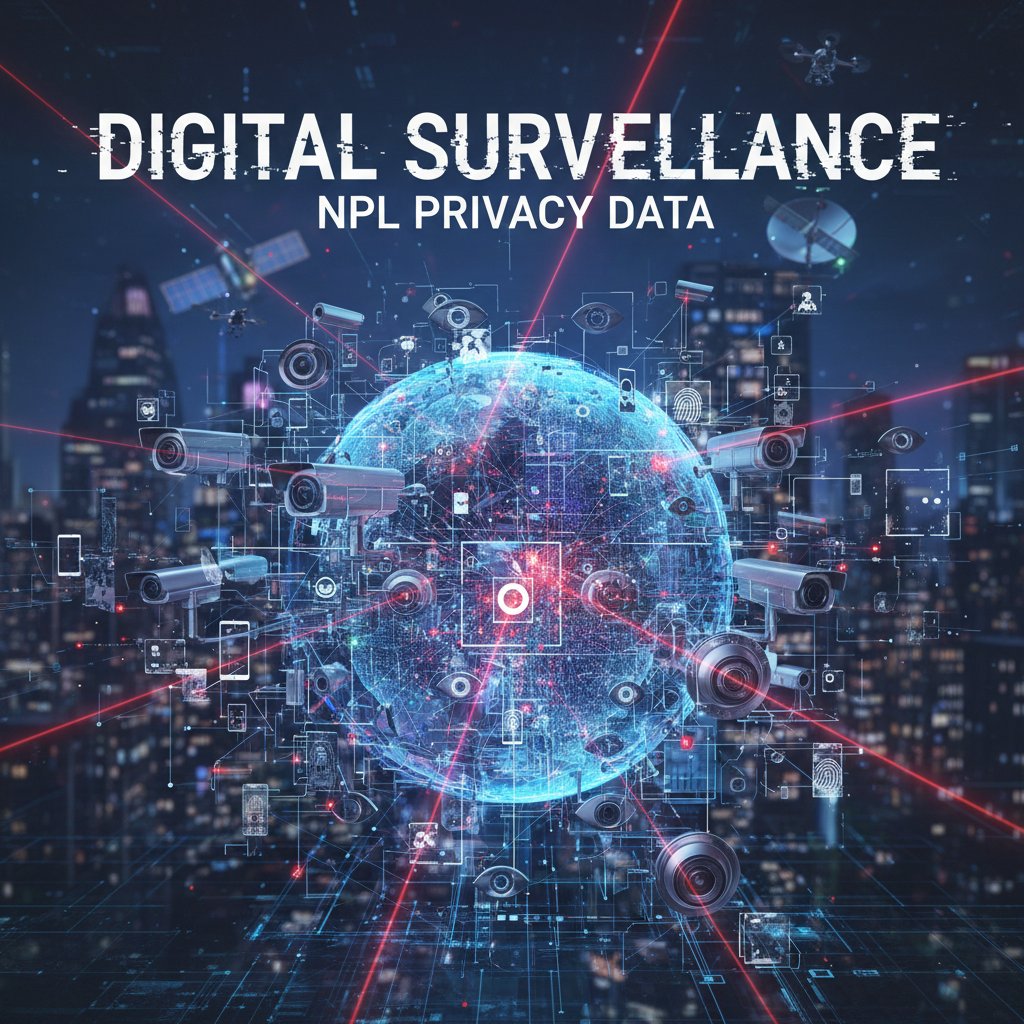

Who controls the conversation? Power, privacy, and manipulation

Language as a weapon: NLP and information warfare

If you think language tech is neutral, think again. NLP is now a battlefield for influence, propaganda, and manipulation. Automated bots flood social platforms with hyper-targeted messaging during elections, while deepfake audio and text blur the line between truth and fiction.

| Tool | Target | Intended Effect | Example |
|---|---|---|---|
| Deepfake audio | Voters | Confuse, mislead, or sway decisions | Fake candidate speeches |
| Bot armies | Social users | Amplify or suppress viewpoints | Hashtag hijacking during protests |
| Sentiment mining | Consumers | Nudge purchasing decisions | Micro-targeted ads based on message tone |

Table 4. Ways NLP can be weaponized. Source: Original analysis based on expert commentary from Medium, 2024 and Eric Koziol, 2024.

The ethical implications? Staggering. Language-driven attacks erode trust, polarize societies, and threaten democracy itself.

The surveillance problem: data, privacy, and consent

NLP’s hunger for data has made privacy a casualty. Every voice command, search term, and chat log becomes fodder for models. Most users never consent to the true scope of this surveillance.

Legal frameworks are scrambling to catch up. Debates rage over who owns “your” words once they’re fed into a training set. Consent is often buried in fine print, leaving users exposed. According to privacy watchdogs, too many NLP systems are black boxes, making it impossible to audit how data is collected, used, or sold.

Algorithmic bias and the fight for fair language

NLP can amplify the worst in us if unchecked. From job application filters sidelining minority candidates to hate speech detection failing in non-English languages, bias is endemic.

Activists and researchers are pushing back: auditing data sources, demanding transparency, and building open-source “fair language” models that resist manipulation.

"If you don’t fix the data, you can’t fix the future." — Sam

But the fight is ongoing—until the incentives for profit and power change, bias is more feature than bug.

How to make natural language work for you: practical applications and power moves

Mastering NLP for work and play

You don’t have to be a coder to harness NLP. Whether automating emails, brainstorming ideas, or managing tasks, NLP-powered tools can supercharge productivity and creativity.

Step-by-step guide to leveraging NLP in daily tasks:

- Use AI assistants for scheduling and reminders.

- Employ text summarizers to digest long reports.

- Harness chatbots for quick answers or troubleshooting.

- Deploy voice search for hands-free efficiency.

- Experiment with AI writing tools for content drafts.

- Integrate translation apps for cross-border communication.

Integrate these tools with your existing workflow to cut through noise and focus on what matters.

Red flags and hidden benefits: what the experts won’t tell you

Every NLP system hides both risks and gems. Underreported challenges include “hallucinated” facts, erosion of privacy, and subtle manipulation. But there are perks: personalized learning, accessibility for disabled users, and faster discovery of trends.

Hidden benefits and red flags in everyday NLP use:

- Benefit: Autocompletion speeds up communication, reducing fatigue.

- Red flag: Overreliance can dull critical thinking or creativity.

- Benefit: Sentiment analysis helps brands respond faster.

- Red flag: These systems may misread irony or nuance, fueling PR crises.

- Benefit: Automated translation bridges cultures.

- Red flag: Persistent errors can erode trust or cause harm in sensitive settings.

To maximize the good and avoid the bad: always verify automated outputs, rotate between tools, and stay vigilant about privacy.
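Why does sentiment analysis stumble on irony? A minimal sketch of lexicon-based scoring, one of the simplest approaches, makes the failure easy to see. The word lists and the function names `sentiment_score` and `label` are hypothetical; commercial tools use far larger lexicons or trained models, though sarcasm remains hard for those too.

```python
import re

# Hypothetical mini lexicon; production tools use far larger lists or trained models.
POSITIVE = {"great", "love", "fantastic", "thanks", "perfect"}
NEGATIVE = {"broken", "terrible", "hate", "late", "worst"}


def sentiment_score(text):
    """Return the number of positive words minus the number of negative words."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)


def label(text):
    """Map the numeric score to a coarse sentiment label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(label("The delivery was late and the box was broken"))
print(label("Oh great, another update. Fantastic, just perfect."))
```

The second message is an annoyed customer, yet every scored word is on the positive list. A brand dashboard built on this would file the complaint under praise, which is exactly why automated outputs need a human check.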

DIY NLP: tools, tricks, and hacks

Getting started with NLP experimentation is easier than you think. Platforms like HuggingFace, Google Colab, and spaCy offer beginner-friendly entry points. The real mistake? Diving in without understanding the data, limitations, or security implications.

Checklist for evaluating new NLP apps and platforms:

- Is the training data transparent and bias-audited?

- Does the provider disclose privacy practices?

- Is there a human-in-the-loop for error correction?

- How often is the model updated?

- Can users export or delete their data?

Stay skeptical and curious: the most powerful NLP hacks are the ones that keep you in control.

The future of natural language: what comes after words?

Beyond text: voice, emotion, and the rise of multimodal AI

Language is no longer just about words. Advances in speech recognition, sentiment detection, and “multimodal” AI (combining text, voice, and images) are transforming how we interact with machines.

Current systems can “read” tone, detect stress, and even infer emotion—sometimes better than humans in controlled settings. This shift promises richer, more intuitive interfaces but raises fresh questions about privacy, consent, and manipulation. The lines between natural and synthetic conversation are blurring with every new breakthrough.

Will machines ever ‘get’ us? The next frontiers

The hardest unsolved problems in NLP remain: genuine understanding, common sense, and world knowledge. Even the most advanced models are confounded by out-of-context questions or cultural references. Experts suggest that bridging this gap may require entirely new paradigms—hybrid systems that integrate language with perception and action.

"Understanding isn’t just words—it’s world knowledge." — Taylor

Breakthroughs or not, machines are getting better at simulating fluency. But “getting” us—feeling, reasoning, improvising—remains a human domain for now.

How to stay ahead: futureproofing your language skills

AI won’t kill language, but it will force us to adapt. To thrive, develop “AI-resilient” communication skills: clarity, originality, and critical reasoning.

Steps to develop AI-resilient communication skills:

- Practice clear, context-rich expression—avoid jargon and ambiguity.

- Cross-check automated outputs; don’t trust, verify.

- Cultivate empathy and cultural awareness—machines can’t fake this.

- Learn to “prompt” AI tools effectively for better results.

- Invest in adjacent skills: data literacy, visual storytelling, and negotiation.

The winning edge? Blending what makes you human with what makes machines efficient.

Debunked: the biggest misconceptions about natural language and AI

AI can read your mind (and other tall tales)

Let’s bust the myths that just won’t die:

- AI can anticipate your every need (it can’t; it only echoes your past).

- Bots are unbiased (their output mirrors their input).

- NLP tools always know what you mean (context is often missing).

- Machines “feel” emotions (they simulate emotion; they don’t experience it).

- More data equals smarter AI (quantity ≠ quality).

These misconceptions persist because of slick marketing, misunderstandings about how AI works, and wishful thinking.

The truth about AI creativity and originality

Can AI create truly original language? The evidence says: not quite. AI-generated poems or stories may pass a quick test, but deeper reading reveals repetition, cliché, or lack of genuine surprise.

| Criteria | AI | Human | Verdict |
|---|---|---|---|
| Novelty | Recombines old patterns | Invents new forms, breaks rules | Edge: Human |
| Emotional depth | Simulated, surface-level | Lived, nuanced | Edge: Human |
| Humor | Often misses nuance | Intuitive, layered | Edge: Human |
| Consistency | Tireless, on-demand | Variable, creative lapses | Edge: AI (for volume, not quality) |

Table 5. Side-by-side comparison of AI vs. human creative output. Source: Original analysis based on expert commentary and Nature Scientific Reports, 2024.

Humans break the mold—AI rearranges it.

Adjacent frontiers: when natural language meets other domains

Natural language in healthcare, law, and activism

NLP is reshaping how professionals diagnose, litigate, and mobilize. In healthcare, AI triages patient messages, surfaces drug interactions, and summarizes records. In law, NLP tools scan thousands of documents for precedent in seconds. Activists use sentiment analysis to track public opinion and spot disinformation campaigns.

Real-world cases:

- In 2024, a US legal firm used NLP to cut research time by 60%, freeing lawyers for higher-impact work.

- An activist group in India deployed chatbots to counter misinformation, reaching over 1 million rural users.

- A hospital in Germany reduced patient triage wait times by 40% using AI-powered message sorting.

Priority checklist for ethical NLP deployment in sensitive fields:

- Transparent data sources and audit trails.

- Explicit consent for data usage.

- Human oversight for automated decisions.

- Regular bias and fairness audits.

Automotive AI and the road ahead

Voice and NLP are revolutionizing the driving experience. Gone are the days of clunky voice controls—today’s best systems let you manage navigation, entertainment, and calls seamlessly. futurecar.ai is a go-to resource for unbiased advice on smart automotive assistants and how they compare.

Strengths:

- Hands-free operation enhances safety.

- Multi-lingual support bridges global markets.

- Integration with other smart devices for a unified experience.

Weaknesses:

- Noise and accent issues can stymie recognition.

- Limited by manufacturer updates and data privacy policies.

- Systems sometimes overpromise and underdeliver on “AI” capabilities.

Culture clash: language, identity, and globalization

NLP is a double-edged sword for culture. On one hand, it can preserve endangered languages, making them accessible and “machine-readable.” On the other, it’s often used to flatten local identities—forcing speakers into English or majority tongues.

Examples:

- In 2023, an AI translation project in Africa struggled, misinterpreting local dialects and customs, leading to social backlash.

- Major platforms’ content moderation algorithms flagged Indigenous language posts as “nonsense,” silencing voices.

- A grassroots NLP initiative in Wales boosted Welsh-language content, reversing digital decline.

"Every word we automate is a piece of culture we risk losing." — Lee

Preserving linguistic diversity means building tech that adapts to people, not the other way around.

Glossary: decoding the jargon of natural language

A field guide to natural language terms

- Natural language processing (NLP): The field of computer science focused on enabling machines to “understand” and generate human language.

- Natural language understanding (NLU): Subset of NLP: extracting intent and meaning from speech or text.

- Natural language generation (NLG): Subset of NLP: producing coherent language output.

- Corpus: A large, structured collection of texts used for training AI models.

- Transformer: A neural network architecture, used by models such as GPT-4, that processes language in parallel, enabling state-of-the-art performance in NLP.

- Intent: The underlying goal or meaning behind a user’s message.

- Entity: Specific objects, names, or concepts recognized in text.

- Context window: The amount of prior information an AI model can “see” when generating or analyzing text.

- Semantic search: Search engines that look for meaning, not just keywords.

- Bias: Systematic errors or prejudices in data or models.

Mastering this vocabulary isn’t just for techies. In an AI-driven world, knowing the language of language is power—protecting you from manipulation and keeping you sharp.

Understanding natural language means understanding the tools, tricks, and traps that define the modern digital experience.

Conclusion: language, power, and the future we’re writing

Synthesis: what we’ve learned (and what you should do next)

Natural language isn’t a gift—it’s a battlefield. Its evolution, from cave walls to neural nets, is a story about power, technology, and the relentless search for meaning. The brutal truths? Machines don’t “get” us, language is never neutral, and every word you use is a data point in someone’s model. Human communication is richer than any algorithm, and, for now, true understanding belongs to us.

The way natural language is digitized, shaped, and weaponized reflects larger upheavals—political, psychological, and cultural. The price of ignoring these realities is high: lost opportunities, compromised privacy, and a future written by someone—or something—else.

So, the final provocation: Are we teaching machines to speak, or are they teaching us to listen differently? The answer isn’t simple, but the responsibility is. Stay vigilant, stay informed, and leverage resources like futurecar.ai to keep your edge in a world where every conversation matters.

Sources

References cited in this article

- Medium: 11 Brutal Truths I Learned in 2024 (medium.com)

- Bram Adams: Predictions for 2024 (bramadams.dev)

- Ipsos: Americans Say 2023 Was a Good Year (ipsos.com)

- Gartner: Hype Cycle for Natural Language Technologies, 2024 (gartner.com)

- EMNLP 2023: Communication Breakdowns (aclanthology.org)

- Scientific Reports: AI Insensitivity to Meaning (nature.com)

- World Economic Forum: Language Diversity at Risk (weforum.org)

- Nature: Industrial Applications of LLMs (nature.com)

- MIT Press: Cognitive Plausibility in NLP (direct.mit.edu)

- DATAVERSITY: 2024 Predictions in AI and NLP (dataversity.net)

- Medium: AI and NLP in Transition (medium.com)

- VentureBeat: The 6 AI Debates Shaping Enterprise Strategy in 2024 (venturebeat.com)

- Reuters: OpenAI and Others Seek New Path (reuters.com)

- MIT Technology Review: Small Language Models (technologyreview.com)

- Wikipedia: History of Artificial Intelligence (en.wikipedia.org)

- Computer History Museum (computerhistory.org)

- Atlantis School of Communication: History of Writing (atlantisschoolofcommunication.org)

- Wikipedia: History of Writing (en.wikipedia.org)

- Europeana: The Computer Revolution (europeana.eu)

- Medium: AI for NLP in 2024 (medium.com)

- BlackboxNLP 2023 (blackboxnlp.github.io)

- AIMultiple: NLP Use Cases (research.aimultiple.com)

- IABAC: Building NLP Pipelines (iabac.org)

- Analytics Vidhya: NLP Learning Path (analyticsvidhya.com)

- Payoda Technology Inc. (payodatechnologyinc.medium.com)

- eMarketer: Voice Assistant User Forecast 2024 (emarketer.com)

- We Are Social: Digital 2024 (wearesocial.com)

- CNET: Alexa Privacy Survey (cnet.com)

- NLP-MisInfo 2023 (sites.google.com)

- Reddit: NLP Researcher Discussions (reddit.com)

- Deqode: NLP Trends 2024 (deqode.com)

- CSU Long Beach: AI Privacy Concerns (csulb.edu)

- IBM: Exploring Privacy Issues in the Age of AI (ibm.com)

- Cloud Security Alliance: AI and Privacy 2024-2025 (cloudsecurityalliance.org)
